A new world of data demands a new take on data protection

Data storage and backup are evolving due to digitisation and remote working. A smart solution is critical for security, growth and innovation.

As data increases exponentially in volume, the types of data we use and the places where protected data is stored are diversifying.

Data storage, backup and resiliency are becoming increasingly complex, and the organisations I talk to are finding that their existing IT infrastructure can't keep up.

More data means new challenges for data storage

Looking back even five to seven years, managing data storage and protection was a lot simpler. Data use was easier to predict and manage because only a limited number of applications generated data, across a few key application types such as enterprise applications, collaboration tools and user-generated file libraries. We just had to build systems around these basic requirements.

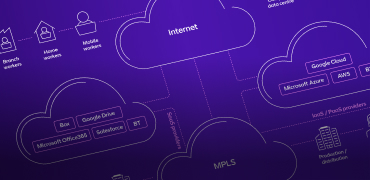

Today's super-connected and digitised world is a data factory, and that's transforming data storage and backup. When I talk to organisations, it's clear that they have data everywhere. Workers use a wide range of devices every day, including personal ones, and remote workers can generate data across multiple networks in a single day. Machine-to-machine communications and the IoT are pumping out yet more data transactions. The types of data being stored are expanding too, whether live feeds, industry-specific rich media content or sensor data from IoT gateways. This adds a lot of complexity to the requirements for backup systems and data recovery processes. At the same time, users and systems expect instant, flexible access to data and storage, often for high-volume transactions.

The average organisation has data in multiple locations, in multiple formats and across different organisations, which means it relies on a range of different backup systems. This can be disjointed and complex to oversee, and it increases running costs. From a security point of view, the CISOs I talk to are worried that the more data access and storage points they have, the more vulnerable their critical data is to attack.

In response to the increased demand for remote data storage and access, many organisations are turning to cloud-based solutions. But, as I point out when we explore the options together, this brings its own implications and requires a rethink of how they handle data backup.

So, given all these challenges and changes, what is required in this new age of data protection?

The 5 factors to consider for data protection

I believe organisations need to reassess how they manage their data as a matter of priority. Here are the five aspects to consider for a robust data protection solution:

1. A smart and robust backup system

There should be checks to make sure that backups can actually be recovered and that the system will do the job when it's needed. Smart systems use data intelligence: they test the system with current backup data rather than older data that is unstructured and unvalidated. During testing you need to be able to mimic the actual production environment, so that the backup system is always prepared and never out of date.
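To illustrate the kind of recoverability check described above, here is a minimal Python sketch, assuming a backup can be restored into a scratch directory for testing. It hashes every file in the source and in the test restore with SHA-256 and reports anything missing, unexpected or different. The function names `checksum_tree` and `verify_restore` are hypothetical, and `shutil.copytree` only stands in for a real restore step; a backup platform would run comparable checks against its own restore jobs.

```python
import hashlib
import shutil
import tempfile
from pathlib import Path


def checksum_tree(root: Path) -> dict[str, str]:
    """Map each file's path (relative to root) to its SHA-256 digest."""
    digests = {}
    for path in sorted(root.rglob("*")):
        if path.is_file():
            digests[str(path.relative_to(root))] = hashlib.sha256(path.read_bytes()).hexdigest()
    return digests


def verify_restore(source: Path, restored: Path) -> list[str]:
    """Return relative paths that are missing, unexpected or changed after a test restore."""
    expected = checksum_tree(source)
    actual = checksum_tree(restored)
    # A path is a problem if it exists on only one side or its digests differ.
    return sorted(name for name in expected.keys() | actual.keys()
                  if expected.get(name) != actual.get(name))


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        production = Path(tmp, "production")
        production.mkdir()
        (production / "orders.csv").write_text("id,total\n1,9.99\n")
        restore_test = Path(tmp, "restore-test")
        shutil.copytree(production, restore_test)  # hypothetical stand-in for a restore job
        print(verify_restore(production, restore_test))  # an empty list means the restore matches
```

An empty result means the test restore matches production file for file; any path in the list points to data that would not have come back intact.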

2. Flexibility

It's important to cover a wide range of workloads, from all devices and locations. The solution should be able to protect VMs, containers, physical devices, storage platforms, public clouds and applications, all through a single, easy-to-use framework. You also need the flexibility to recover exactly what you need, from a single file to a whole estate.

3. A single pane view of your data

Your data backup tool needs to act as a single pane of glass that aggregates and manages the backup of your entire estate across different devices. You don't want to be using different systems with separate interfaces to view the status of your data protection. Look for a single overview, even if you're using multiple storage solutions such as a hybrid cloud setup.

4. Automation

Using one solution for all your data backup reduces the need for multiple manual processes. Ideally, all data protection should be automated and inbuilt, saving your organisation time and money, and ensuring round-the-clock protection.

5. Security from attacks

Multiple layers of security are key. Whether it's encryption, scanning of backup copies or immutability features, customers are looking at security features with much more interest. Data isolation capability is critical for security. Smart systems use a WORM (write once, read many) solution, often combined with an 'air gap' style vault that keeps data locked away from the rest of the network. Once data has been written, it cannot be overwritten. So, even if there is a ransomware attack, there's always a clean set of read-only data that can be used to restore the estate.
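The WORM principle can be illustrated with a toy, in-memory Python class. This is a hypothetical sketch of the concept only: `WriteOnceStore` is an invented name, and in practice immutability is enforced by the storage platform or vault itself, not by application code that an attacker with enough access could simply bypass.

```python
class WriteOnceStore:
    """Toy write-once-read-many (WORM) store: each key can be written exactly once."""

    def __init__(self) -> None:
        self._objects: dict[str, bytes] = {}

    def put(self, key: str, data: bytes) -> None:
        # Refuse any second write to an existing key, so a stored copy is never replaced.
        if key in self._objects:
            raise PermissionError(f"{key!r} is immutable and cannot be overwritten")
        # Store an immutable bytes copy so later changes to the caller's buffer have no effect.
        self._objects[key] = bytes(data)

    def get(self, key: str) -> bytes:
        # Reads are unrestricted: "read many".
        return self._objects[key]

    def delete(self, key: str) -> None:
        # Deletion is refused as well, since it would also destroy the clean copy.
        raise PermissionError(f"{key!r} cannot be deleted from a WORM store")
```

A second write to the same key raises an error, so a ransomware process that tries to overwrite a stored backup fails and the clean copy remains available for recovery.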

A new age for data protection

Hybrid Cloud Backup and Replication from BT protects all your workloads in one solution, without the need for multiple, complex technology stacks. It's hardware agnostic and designed to easily handle high levels of automation and pure hybrid architectures. It removes complexity from backup and replication, operating on a global scale with industry-leading service level agreements. Security is built in to mitigate ransomware attacks, check for errors and vulnerabilities, and encrypt your data at rest as well as in transit. We'll deliver it at your choice of location, using a commercial model that can help you cut costs and CapEx.

To find out more about how we can protect and manage your data, please contact your account manager.